Listening through the congregation: let AI help you learn from what they hear

An occasional essay

Photo by Larry George II on Unsplash

This is the story of a group who got together to improve the quality of preaching by listening to what their congregations were hearing. They represent half a dozen churches, all from the New Frontiers movement, but this was neither an official study nor commissioned by New Frontiers, and it wasn’t a rigorous academic study either. The bottom line, however, is that you could copy it pretty easily.

The group grew slightly over the course of the experiment. I’ve acknowledged everyone at the end, with special mention of particular initiatives, but everyone was very active in making this happen.

What do you need to know?

Turns out there isn’t a simple definition of good preaching that you can work into a questionnaire and from which easy answers pop out. The literature is knee-deep in ‘how to preach’ material from all kinds of angles. There’s plenty of guidance on how to run focus groups and peer review systems, but we weren’t sure that would take us where we wanted to go.

At the start, Mick Taylor and I ran a short survey presenting a preaching framework to gauge the appetite for a project in the area. It was sent to around 100 churches across the evangelical spectrum and received 26 responses – mainly from leaders involved in preaching.

Apart from showing that our questions were not very clear (my bad), it revealed a minor paradox: most respondents professed themselves satisfied with the preaching at their church while also indicating an interest in doing better. Many had ongoing improvement programmes. Why was the ‘how to preach’ material already out there still leaving people a little hungry?

Personally, I wonder whether much of significance has been added since Sangster – a Methodist preacher – was writing in the ’50s (see The Craft of the Sermon).

Some dead ends with frameworks

Having decided to start afresh, we began by looking for a framework of preaching against which individual sermons or even series and teaching plans might be assessed. Our first attempt was the slightly ungainly 4U Framework:

Understanding: how well it helps the listener understand the passage

Unpacking: how well it connects to wider concepts and everyday life

Utility: how useful it is in shaping well-rounded Christian behaviour

Unction: evidence of the Spirit’s anointing at the time

Turned out 4U was hard to use, so we cast around for other frameworks, including ACTS:

Anointing: evidence of the Spirit’s activity in the preaching

Connections: evidence that the sermon connects at many levels

Techniques: evidence of good teaching methods for learning and retention

Scriptural: extent to which the preaching and teaching is grounded in the Bible

However, it soon became clear that all kinds of frameworks could be generated quickly. Andy Mehigan (see acknowledgements) applied ChatGPT to the problem and soon emerged with an analysis of 9 teaching and preaching frameworks, from Aristotle to Tim Keller. It also produced another acronym, REACH:

Rooted in Scripture: faithfulness to the text.

Engaging Delivery: captivating communication.

Application to Life: relevance and practicality.

Christ-Centred: gospel focussed.

Holy Spirit Empowerment: spiritual power and conviction.

Given the ease with which such frameworks may be generated, and the likelihood that churches might want to tailor their frameworks to their style, we abandoned the idea of grounding everything in a common framework and focused on a slightly different goal.

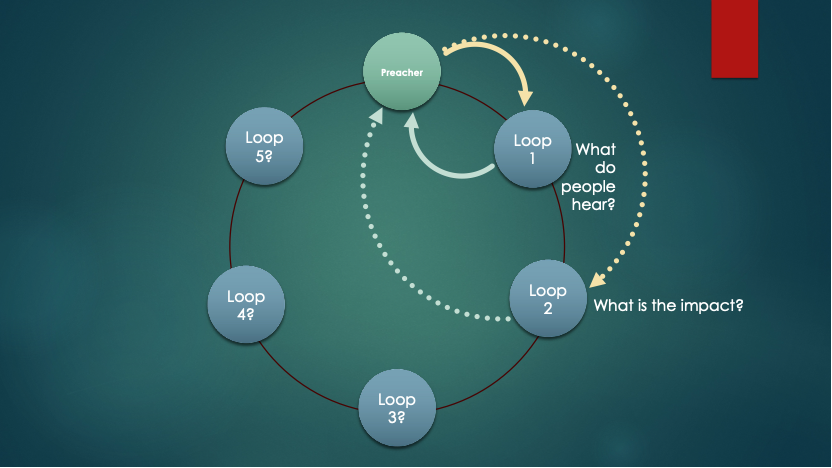

So, we explored the interaction between the preacher and the congregation – whatever quality framework the church concerned was using. In this example (loop 1), the preacher preaches (yellow arrow) and the congregation provides feedback (blue arrow). The aim of loop 1 is to enable the preacher (and perhaps an assessment team working alongside) to understand what is being heard by the congregation.

We can imagine a longer-term feedback loop in which feedback is provided about the impact of the preaching upon the congregation (loop 2). Further loops are possible in principle, such as:

Possible loop 3: What can the preacher learn from what the congregation knows?

Possible loop 4: What can the preacher learn from what the congregation learns?

Etc, etc.

The rest of this piece is about designing the loop 1 tools that help preachers and teachers understand what their congregations are hearing from the sermons.

Hearing what is heard

In a sense, we exchanged one beguilingly simple question (how good is the preaching and how can we nudge it towards better things?) for another (what has the congregation taken from the sermon and what can we learn from that?).

After further discussion, the group agreed to use three types of questions to probe what the congregation has heard from the preaching and teaching. This won’t of itself answer questions about the quality of the preaching but will provide evidence of what is being communicated that can be used by planning, training and delivery teams.

Heads, hearts and hands

Specifically, we probed 3 levels of reaction to a sermon:

Heads: what have congregants learned from the sermon?

Hearts: what was the emotional engagement and impact?

Hands: what do congregants plan to do about the message?

Each church agreed to run around 5 services at which people would have the opportunity to answer the following 3 questions:

What was the main thing you gained from the message?

How did the message make you feel?

How relevant was the message to your life?

It wasn’t quite that simple, because one church, which was running an introductory series on who Jesus was and is, had altered the questions slightly. However, it’s close enough.

Ethics and data collection

The data was collected anonymously in two ways. Most responses were written on paper and then transcribed, largely by hand, onto spreadsheets. However, one church used a QR code and SurveyMonkey to let people respond on their smartphones, which gave an entirely digital capture process. Congregants had been told that participation was voluntary and anonymous and that the analysis would be done by third parties.

In the event, because the data was scanned and e-mailed out, it wasn’t possible to keep the raw (but unnamed) scripts entirely hidden from local teams. However, the analysis was done by two people, Martin Bradley (see below) and me, neither of whom was involved in any of the preaching or belonged to any of the churches running the preaching series and collecting the data. The other route for analysis was an AI engine.

One church set aside 5 minutes for reflection during the service and asked people to answer the 3 questions as part of their engagement. Where a QR code was used, it was not possible to say exactly when the questions were answered. There was evidence that some forms had been filled in during the following week, but most were filled in on the day.

The vast majority of the responses (529 out of 564) were on paper, and most of these were transcribed by the two independent assessors noted above. Cut-and-paste on an iMac worked for some of the handwriting, although the scripts were extremely variable: people added sketches, wrote around corners and up margins, and crossed things out. Also, many of the respondents were not native English speakers, so spelling and syntax were variable.

The big learning for us was not to use handwritten input!

Analysis

Since we didn’t really know what would come back, working out ahead of time how to analyse the responses was tricky.

The most obvious analysis is for the preacher to read the anonymised feedback from the congregation. However, we felt that some form of scoring system could also add value.

Just giving preachers a numerical feedback score would lose all the richness of the comments, and not give much of a message other than ‘could do better’ or ‘did better than’...

I went through all the responses quickly, asking of each one: is there evidence that this person has engaged {intellectually, emotionally, practically} with the sermon or talk?

Having played with various ideas, I settled on a simple score of 0 (not enough evidence to say) or 1 (enough evidence to say). This led to a series of ‘alignment factors’: for each type of engagement, the fraction of responses showing that the respondent had engaged.
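As a rough illustration of the arithmetic, the sketch below turns a table of 0/1 judgements into alignment factors, one per type of engagement. It is a minimal Python sketch under stated assumptions, not the tool we actually used: the dimension names `heads`, `hearts` and `hands`, the one-row-per-response layout and the function name `alignment_factors` are all hypothetical, and the sample rows are made up rather than taken from our data.

```python
# Minimal sketch (hypothetical names and layout): one row per response,
# holding a 0/1 judgement for each type of engagement.
# 1 = enough evidence of engagement, 0 = not enough evidence to say.
DIMENSIONS = ("heads", "hearts", "hands")


def alignment_factors(scores: list[dict[str, int]]) -> dict[str, float]:
    """Return, for each dimension, the fraction of responses scored 1."""
    if not scores:
        raise ValueError("no responses to score")
    factors = {}
    for dim in DIMENSIONS:
        values = [row[dim] for row in scores]
        if any(v not in (0, 1) for v in values):
            raise ValueError(f"scores for {dim!r} must be 0 or 1")
        factors[dim] = sum(values) / len(values)
    return factors


if __name__ == "__main__":
    # Four made-up responses, for illustration only (not the study's data).
    example = [
        {"heads": 1, "hearts": 1, "hands": 0},
        {"heads": 1, "hearts": 0, "hands": 0},
        {"heads": 0, "hearts": 1, "hands": 1},
        {"heads": 1, "hearts": 1, "hands": 0},
    ]
    print(alignment_factors(example))
```

With the four made-up rows, it reports 0.75 for heads and hearts and 0.25 for hands: three of the four responses showed intellectual and emotional engagement, and one showed practical engagement.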

Meanwhile, Andy Mehigan (see acknowledgements below) had been exploring the use of NotebookLM and ChatGPT to get a handle on the question.

The first-pass attempt was suspect, because it turned out that only around 25% of the scripts were readable by AI. However, re-running the analysis on a table of digitised scripts (from the manual transcription) showed that AI engines, using complementary assessment tools, could yield excellent insight.

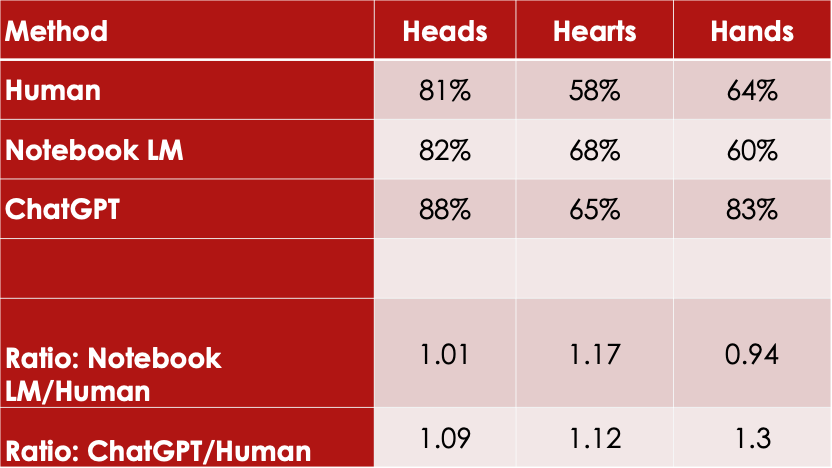

We ran a comparison on a set of 86 responses, using the same table of responses as input data in every case, and obtained the comparison shown above between two AI engines and a human assessor. The two lines at the bottom give the ratio of the NotebookLM score to the human score and of the ChatGPT score to the human score.

It’s normal to include a ‘do not do this at home’ warning, but actually, we’re hoping you might do just that. However, in single-person ministry teams it could be dangerous, because it might give the main preacher a lot of information about individuals in the congregation, which could prove unhelpfully candid on the one hand or reveal personal information about congregants on the other.

Therefore, if you try it at home, it must be implemented as a team activity with appropriate protection in place.

It would also be unwise to present something this simple in a PhD dissertation, for instance. However, there is broad agreement between the human and the two AI options (to within an average of 20% for the alignment score). Since it is still early days and we’re looking for trends, this is good enough to be getting on with.
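To make the comparison concrete, here is a minimal Python sketch of how AI and human alignment factors might be compared. It assumes that ‘agreement to within an average of 20%’ means the mean of |AI score ÷ human score − 1| across the three types of engagement; that reading, the function names `score_ratios` and `mean_relative_difference`, and the sample numbers are all illustrative assumptions, not our actual results or method.

```python
# Minimal sketch: compare an AI engine's alignment factors with a human's.
# Assumption: "within an average of 20%" is read as the mean of
# |AI/human - 1| across dimensions. All names here are hypothetical.


def score_ratios(ai: dict[str, float], human: dict[str, float]) -> dict[str, float]:
    """Ratio of the AI alignment factor to the human one, for each dimension."""
    ratios = {}
    for dim, human_score in human.items():
        if human_score == 0:
            raise ValueError(f"human score for {dim!r} is zero; ratio undefined")
        ratios[dim] = ai[dim] / human_score
    return ratios


def mean_relative_difference(ratios: dict[str, float]) -> float:
    """Average distance of the ratios from 1.0 (0.2 means 20% on average)."""
    return sum(abs(r - 1.0) for r in ratios.values()) / len(ratios)


if __name__ == "__main__":
    # Made-up alignment factors, for illustration only (not the study's results).
    human = {"heads": 0.80, "hearts": 0.50, "hands": 0.40}
    chatgpt = {"heads": 0.88, "hearts": 0.45, "hands": 0.48}

    ratios = score_ratios(chatgpt, human)
    gap = mean_relative_difference(ratios)
    print({dim: round(r, 2) for dim, r in ratios.items()})
    print(f"mean difference: {gap:.0%}, within 20%: {gap <= 0.20}")
```

With these invented figures the ratios come out at about 1.1, 0.9 and 1.2, and the mean difference is about 13%, so this hypothetical engine would count as agreeing with the human to within 20%. Averaging the absolute distance from 1 stops an over-score on one dimension from cancelling out an under-score on another.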

In addition, it is not difficult to get an AI engine to review a set of responses and list the themes that the talk addressed. As well as enhancing the anonymity of respondents, this allows congregational responses (ideally captured through smartphones rather than on paper) to be turned around in near real-time.

This would provide:

A set of alignment factors showing how well the congregation engaged intellectually, emotionally and practically with the talk.

A thematic analysis of the talk through the ears of the congregation, with examples of the comments that were made.

Feedback

To maximise the benefit and keep people engaged, it’s important to take all findings back to the congregation. I believe this was done to a limited degree, but not systematically.

How often?

Clearly, asking for feedback could become a drag if everyone filled in responses every week, unless it were integrated into the service and served an additional role – in aiding reflection, for instance. An alternative would be to ask a cross-section of the congregation (a real cross-section, not a mix of the leadership’s friends – or enemies!) to take the task on for a month at a time and draw those groups into the discussion of preaching.
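If you go the cross-section route, one simple way to keep the choice honest is a stratified random draw: people are grouped (by age band, say) and each group is sampled in proportion to its size. The Python sketch below is a hypothetical illustration of that general technique, not something our group did; the function name `cross_section`, the (name, group) layout and the grouping itself are assumptions.

```python
import random
from collections import defaultdict
from typing import Optional


def cross_section(members: list[tuple[str, str]], size: int,
                  seed: Optional[int] = None) -> list[str]:
    """Pick about `size` people, drawing from each group in proportion to its share.

    `members` is a list of (name, group) pairs; every group gets at least one place,
    so the result can be slightly larger than `size`.
    """
    if size <= 0 or not members:
        return []
    rng = random.Random(seed)  # seeded so the draw can be reproduced and checked
    groups: dict[str, list[str]] = defaultdict(list)
    for name, group in members:
        groups[group].append(name)
    chosen: list[str] = []
    for names in groups.values():
        # Proportional quota, rounded, with a floor of one so no group is left out.
        quota = max(1, round(size * len(names) / len(members)))
        chosen.extend(rng.sample(names, min(quota, len(names))))
    return chosen


if __name__ == "__main__":
    # Hypothetical roster, for illustration only.
    roster = [("Ann", "18-30"), ("Ben", "18-30"), ("Cat", "31-60"),
              ("Dan", "31-60"), ("Eve", "31-60"), ("Fay", "60+")]
    print(cross_section(roster, size=3, seed=1))
```

Because the selection is random within each group, no one gets to hand-pick the panel, and the seed lets a second person rerun the draw and confirm it.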

Utility

Don’t forget, this is the first small step in the process of listening through the congregation’s ears. It won’t transform preachers on its own, but it may fuel a conversation across preaching teams.

If you’d like to try these ideas out, get in touch and I’ll help all I can!

Acknowledgements

I’d like to thank and acknowledge the rest of the team for their contributions, their fellowship during this experimental phase, and their thoughtful assessment throughout. Martin, in particular, was very thorough in his proofing. All errors and problems with this post are my own.

Thanks, then, to: Martin Bradley who worships at Ascot Life Church; Ollie Elliot from Open Door Church, Sunbury; Barney Hall from Gateway Church, Ashford; Andy Mehigan from Westminster Chapel, London and Mick Taylor from Grace Church, Exeter.

Like this? More occasional theological reflections

Faith, hope and love in Hebrews (11 May 2025)

Local leadership: what’s your model? (13 October 2025)

Hermeneutics and clay (3 November 2025)

Holiday reading: a 3 minute guide to Acts with 1 chart (22 December 2025)

Neurodiversity and church (29 December 2025)